Intuitive Interaction Interfaces for Elementary Mathematics

Objectives, achievements and challenges

Work Package 3 has an ambitious objective: the design and development of innovative and intuitive interaction interfaces for Elementary Mathematics, including voice and direct manipulation user interfaces.

This objective is pursued through the following steps:

- Provision of speech production and voice recognition software (to enable a more natural interaction of children with the system).

- Provision of a Graphical User Interface (GUI) framework to create intelligently supported exploratory activities.

- Provision of a coherent visual interface, look and feel for the iTalk2Learn platform.

Progress

During the first year of the project, the team working on this work package focused on:

- Developing a common understanding about the possibilities afforded by the corresponding technologies.

- Gathering requirements for voice production and speech recognition.

- Reviewing the state-of-the-art for exploratory learning environments (ELEs) and voice user interfaces (VUIs).

- Identifying the technical design options for the subsequent development of the ELE.

As a result, we have begun operationalising requirements by:

- Working on voice interaction, through speech production and recognition software.

- Designing and developing the first prototype of the ELE, Fractions Lab. To guide the design of the ELE, we selected a threefold framework for principled task design. It integrates design conjectures that arise from experience with related software, design drivers that arise from the literature, and design assumptions that arise from the developers’ pedagogical approach.

Speech recognition and voice production

To create a viable speech recognition and production system, we began collecting speech data early in the iterative development cycle. To this end, we contacted several schools so that we could record students from the iTalk2Learn target group.

The students were recorded while solving problems related to fractions. To further improve the quality of our recordings and transcriptions, we are trialling an iterative approach: two partners from the team send audio and initial transcripts produced with the XTRANS system to another partner and then await feedback from that partner. Once this cycle is complete, the partners begin to collect further speech data.

The iTalk2Learn ELE: Fractions Lab

The design of Fractions Lab has not been straightforward. It requires a ‘back and forth’ process that involves requirements gathering, development of features, and feedback from partners, teachers and students.

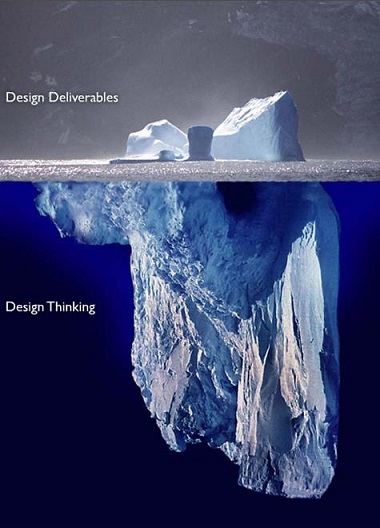

This activity resulted in a design document of over 100 pages. It can be compared to an iceberg: the document is the part that emerges above the water, and the design thinking behind it is the submerged part.

More than half the work has already been done (layout, representation, and most of the functionalities are completed or in progress).

Figure 1 – Image from: dailygumboot.ca

Fractions Lab – a preview

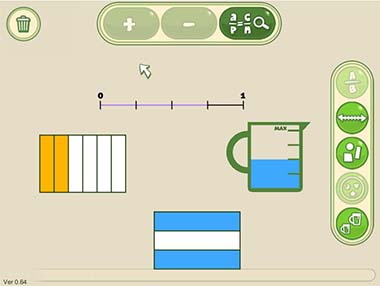

Fractions Lab is an exploratory learning environment that allows the students to:

- Visualise fractions.

- Discover the relationship between various fractions and their relation to the whole.

- Learn operations in a simple way.

Figure 2

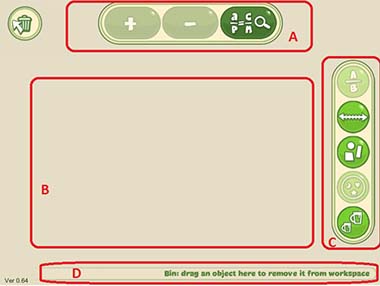

In the Representation area [C], users can select one of the five available types of representation (numeric, lines, liquid measures, geometrical shapes, and patterns called “sets”), manipulate it in the Experimental area [B], and get tips, hints and feedback in area [D]. [Fig.3]

Figure 3

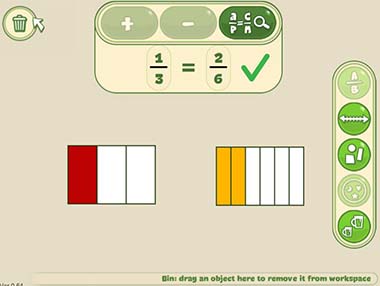

Moreover, users can drag and drop the representations displayed in the Experimental area into the Operations area [A] to practise addition, subtraction and equivalence. [Fig.4]

Figure 4
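As a worked illustration of the three operations practised in the Operations area, the sketch below uses Python's standard `fractions` module. It is not taken from Fractions Lab: the example values (1/2, 1/4, 2/4) and the helper name `are_equivalent` are assumptions chosen only for illustration.

```python
from fractions import Fraction

# Hypothetical example values, not taken from Fractions Lab.
half = Fraction(1, 2)
quarter = Fraction(1, 4)

total = half + quarter        # addition: 1/2 + 1/4
difference = half - quarter   # subtraction: 1/2 - 1/4


def are_equivalent(n1, d1, n2, d2):
    """Return True if n1/d1 and n2/d2 name the same quantity.

    Two fractions are equivalent when cross-multiplying gives equal products.
    """
    return n1 * d2 == n2 * d1


print(total)                       # 3/4
print(difference)                  # 1/4
print(are_equivalent(2, 4, 1, 2))  # True
```

The cross-multiplication check works on the unsimplified numerators and denominators, which mirrors how two visually different representations (for example 2/4 and 1/2) can stand for the same quantity.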

More than one representation can be displayed at a time. [Fig.5]

Figure 5

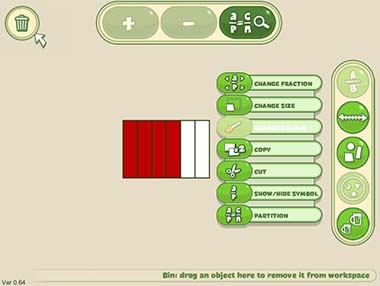

When a student right-clicks on a visualised fraction, a menu appears with tools that can be used to operate upon (or experiment with) the representations. [Fig.6]

Users will be able to:

- Change fraction denominator or numerator

- Change size

- Change colour

- Copy

- Cut the string and a selection of other representations

- Partition

Figure 6

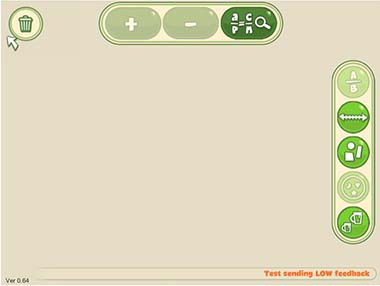

At the bottom [D] of the display area, hints, tips and feedback are presented to users. At this stage of development, different functionalities are available:

- Integrated hints (in green, Fig.6): Give users contextual help on the selected functionality.

- Low interruptive feedback (in orange): Provides students with suggestions based on their learning process. This task-dependent support comes from the iTalk2Learn platform. [Fig.7]

Figure 7

- High interruptive feedback: Gives students feedback or messages that require their full attention and a voluntary action to close them. [Fig.8]

Figure 8
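The three kinds of feedback described above could be modelled with a small data structure such as the following. This is a minimal sketch only: the names `FeedbackKind`, `FeedbackMessage` and `requires_dismissal`, and the example texts, are hypothetical and do not reflect the actual Fractions Lab or iTalk2Learn implementation.

```python
from dataclasses import dataclass
from enum import Enum


class FeedbackKind(Enum):
    """Hypothetical labels for the three kinds of feedback in area [D]."""
    INTEGRATED_HINT = "integrated hint"      # contextual help, shown in green
    LOW_INTERRUPTIVE = "low interruptive"    # suggestions, shown in orange
    HIGH_INTERRUPTIVE = "high interruptive"  # must be closed by the student


@dataclass(frozen=True)
class FeedbackMessage:
    kind: FeedbackKind
    text: str

    @property
    def requires_dismissal(self) -> bool:
        # Only high interruptive feedback needs a voluntary action to close it.
        return self.kind is FeedbackKind.HIGH_INTERRUPTIVE


# Placeholder messages for illustration only.
hint = FeedbackMessage(FeedbackKind.INTEGRATED_HINT, "Example hint text")
alert = FeedbackMessage(FeedbackKind.HIGH_INTERRUPTIVE, "Example message text")

print(hint.requires_dismissal)   # False
print(alert.requires_dismissal)  # True
```

Keeping the dismissal rule as a derived property, rather than a stored flag, means a message can never be marked as dismissible in a way that contradicts its kind.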

Ongoing activities and plans for the future

Currently the members of the working group are engaged on many fronts.

On the one hand, we are finalising the development of Fractions Lab (in particular, implementing new actions, operations, tools and representations). We will also harmonise and enhance the GUIs of the platform as a whole to create a coherent visual interface for the various user-facing components of the system.

On the other hand, we are enhancing the acoustic training environment, using affective features to improve the sequencing of tasks, and we are studying how students react to the computer’s voice. During the second year, we expect to deepen this understanding by considering students' behaviour while they interact with the learning support system. To do so, we will investigate machine learning models that extract the student's current affective state and context from speech interaction.